-

Data Mesh on Databricks (Part 1)

In this post we will introduce the data mesh concept and the Databricks capabilities available to implement a data mesh.

Courtesy :- Databricks Blogs

-

Data Mesh on Databricks (Part 2)

This blog will explore how the Databricks Lakehouse capabilities support Data Mesh from an architectural point of view.

Courtesy :- Databricks Blogs

-

Modeling best practices for Databricks Lakehouse

Databricks Lakehouse is a scalable cloud platform that combines the best of what data warehouses and data lakes have to offer.

Courtesy :- SqlDBM, Medium

-

Dimensional Data Modelling for Big Data

Dimensional data modelling has been a popular and effective approach for designing data warehouses and enabling business intelligence.

Courtesy :- ScholarNest

-

Well-Architected Data Lakehouse

Well-architected frameworks for cloud services are collections of best practices, design principles, and architectural guidelines that help organisations design, build, and operate reliable, secure, efficient, and cost-effective systems in the cloud.

Courtesy :- Databricks Blogs

-

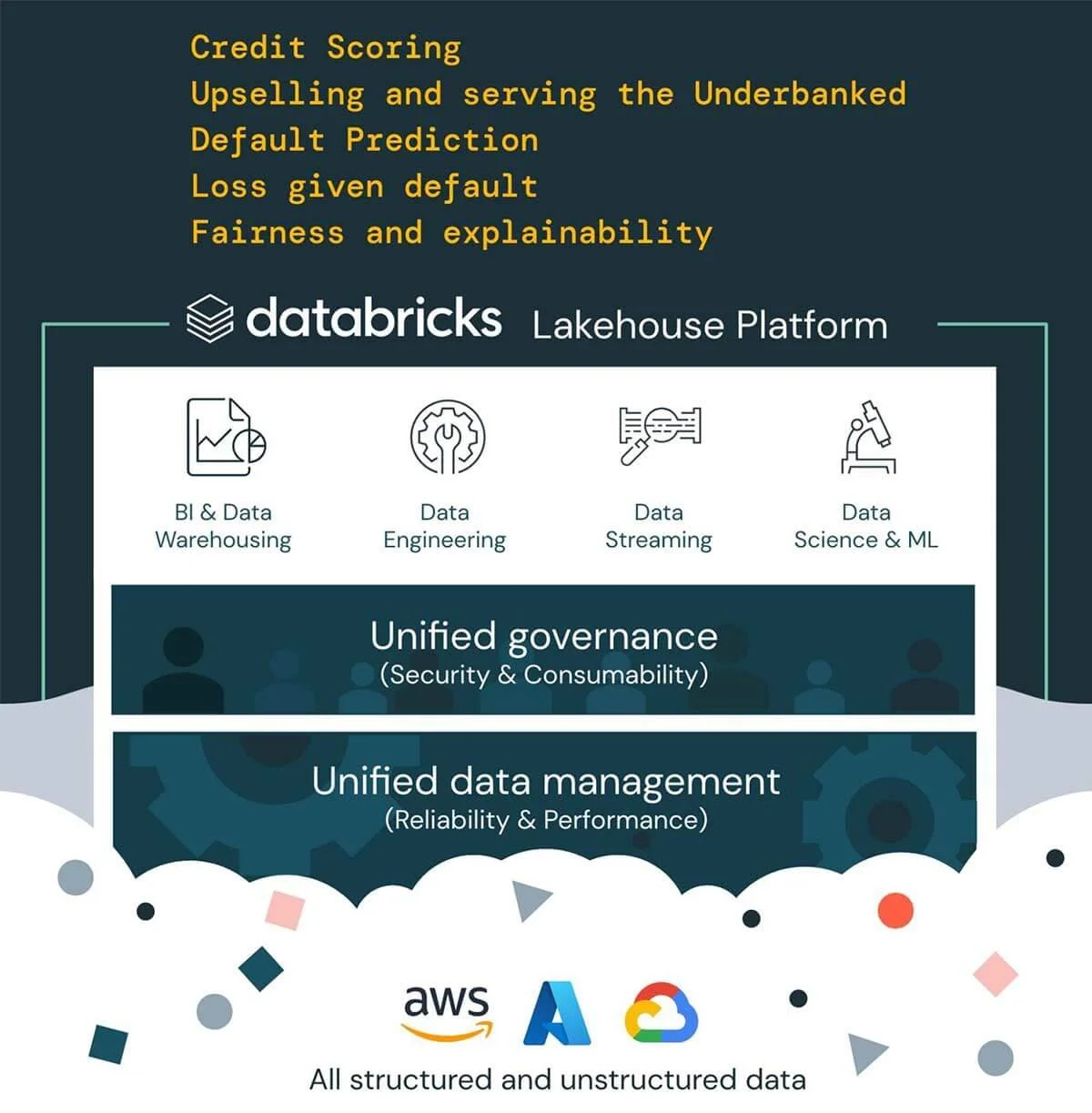

Credit Data Platform on the Databricks Lakehouse

This design offers a credit data platform that provides a holistic and efficient solution to the credit decisioning process. The platform can enable data integration, audit, AI-powered decisions, and explainability, providing a single source of truth.

Courtesy :- Databricks Blogs

-

Database Modeling: Relational vs. Transformational

When I hear developers say “I don’t need relational modeling, I can do all the joins myself,” what I really hear is, “I don’t need a map. I know my way around.” Which is often true — until it isn’t.

Courtesy :- SqlDBM, Medium

-

What Can SqlDBM Do For You?

SqlDBM is an online, collaborative data modeling and diagramming tool. It is used by thousands of companies worldwide, giving them clarity and efficiency in visualizing and developing their data models. A practical use case could demonstrate its potential.

Courtesy :- SqlDBM, Medium

-

Data Mesh — Delivering Data-Driven Value at Scale by Zhamak Dehghani

The book is logically structured into five parts covering the what, why, and how of the data mesh concept, architecture, and implementation. The "how" part is understandably more detailed, focusing on how to design the core data mesh architecture and the data product architecture.

Courtesy :- Writer Venkataraman Balasubramanian on Medium

-

It’s Time for Streaming Architectures for Every Use Case

In today's data-driven world, organizations face the challenge of effectively ingesting and processing data at an unprecedented scale. With the amount and variety of business-critical data constantly being generated, the architectural possibilities are nearly infinite and can be overwhelming. The good news?

Courtesy :- Databricks Blogs

-

What is a Data Intelligence Platform?

How AI will fundamentally change data platforms, and how data will change enterprise AI. The observation that "software is eating the world" has shaped the modern tech industry. Today, software is ubiquitous in our lives, from the watches we wear to our houses, cars, factories and farms. At Databricks, we believe that soon, AI will eat all software.

Courtesy :- Databricks Blogs

-

What is a Data Observability Platform?

Data observability refers to an organization’s comprehensive understanding of the health and performance of the data within their systems. Data observability tools employ automated monitoring, root cause analysis, data lineage, and data health insights to proactively detect, resolve, and prevent data anomalies.

Courtesy :- Monte Carlo Data

-

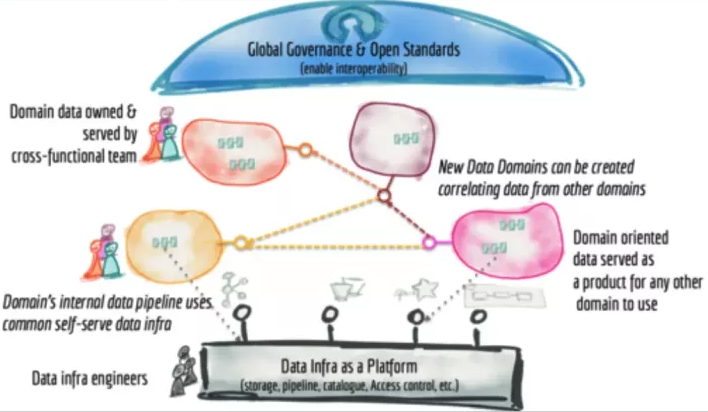

How Not to Mesh it Up

Ask anyone in the data industry what’s hot these days and chances are “data mesh” will rise to the top of the list. But what is a data mesh and why should you build one? Inquiring minds want to know.

In the age of self-service business intelligence, nearly every company considers itself a data-first company, but …

Courtesy :- Monte Carlo Data

-

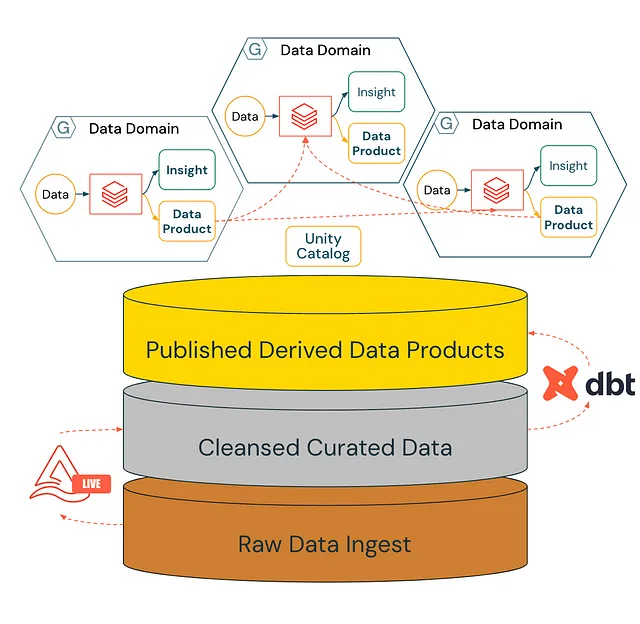

Creating a Mesh with Medallion

This article presents a point of view on how the emerging Data Mesh paradigm can coexist with one of the most common modern data architectures, Medallion.

A medallion architecture is a data design pattern used to logically organize data in a lakehouse, with the goal of …

Courtesy :- Nicola De Seta on Medium

-

The emergence of the Medallion Mesh

Most large organizations have realized that Data Mesh addresses significant bottlenecks in delivering solutions. The Medallion architecture pattern, emerging from the shortcomings of schema-on-read attempts with Hadoop, offers an alternative.

This pattern ensures data is neatly organized and ready for use.

Courtesy :- Franco Patano on Medium

-

Why Databricks is at the Goldilocks Zone in the Data Universe?

In the expansive universe of Data Technology, imagine a Goldilocks Zone where a data platform orbits at just the right distance from cloud hyperscalers: it does not lock its customer organisations into a single hyperscaler, and it supports concurrent multi-cloud hosting.

Courtesy :- Devi Acharya on Medium

-

Migrating to Databricks from Redshift

Why we are confident of the advantages Databricks has over Redshift, and why organizations should consider a strategic migration to position their business for success with analytics and AI.

Courtesy :- Bentley Ave Data Labs on Medium

-

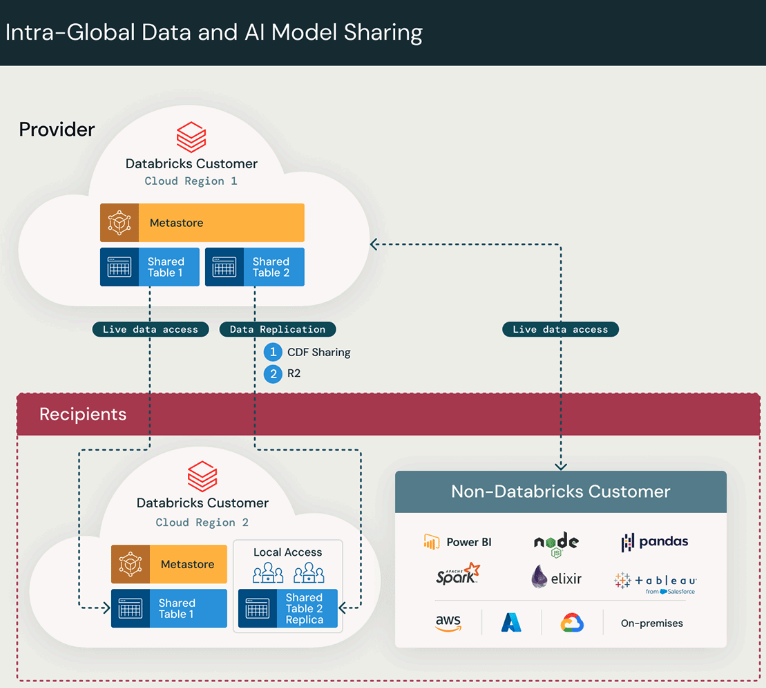

Architecting Global Data Collaboration with Delta Sharing

Enable your organization to scale by securely and efficiently sharing data across clouds, platforms, and regions. This post presents data replication options within Delta Sharing and explores the related architecture guidance.

Courtesy :- Databricks Blogs

-

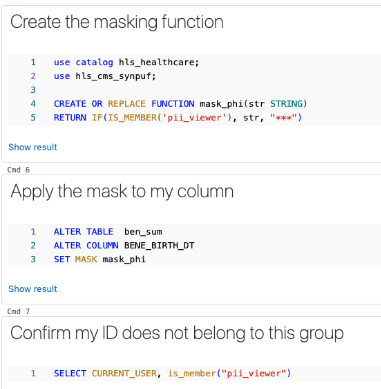

Automating Governance of PHI Data in Healthcare

Today's enterprise data estates are vastly different from 10 years ago. Industries have transitioned their analytics from monolithic data platforms to distributed, scalable, and almost limitless compute and storage capabilities.

Courtesy :- Databricks Blogs

-

Streaming with SQL on Databricks

How to use SQL to simplify streaming pipeline development and management with Databricks’ Delta Live Tables (DLT). DLT is a managed service from Databricks designed for building reliable data pipelines for batch and streaming use cases.

Courtesy :- Hosea Kidane on Medium

-

Unity Catalog Governance Value Levers

Databricks Unity Catalog is the industry’s only unified governance solution for data and AI. Governance ensures data and AI products are consistently developed and maintained, adhering to precise guidelines and standards. It is the blueprint for architects, bringing their solutions and data vision to life.

Courtesy :- Databricks Blogs

-

Power of Gen AI with Databricks SQL

AI functions are built-in Databricks SQL functions that let you access large language models (LLMs) directly from SQL. Previously, data scientists used Databricks Model Serving to deploy and manage all AI models, e.g. foundation models (Llama, MPT, Mixtral) or external models (OpenAI, AWS Bedrock, Anthropic, AI21, etc.). The need to enable SQL analysts to use generative AI at work is apparent.

Courtesy :- Yatish Anand on Medium